Weekly Digest Issue #166, 22 March 2026

Standards are the invisible infrastructure of AI.

Standards are not merely bureaucratic "alphabet soup"; they are the invisible blueprints that determine whether a frontier technology serves as a foundation for growth or a trap for future failure. We thrive when we can cooperate on the basics rather than constantly negotiating them.

Fundamentally, a standard is a collectively agreed way of implementing a technology. It is the specification that enables interoperability, which is why a nut from one manufacturer fits a bolt from another. Historically, such specifications have quietly underpinned major human achievements.

History shows that a lack of early coordination can lead to "path dependence", a condition in which societies become locked into inefficient systems because switching costs are prohibitive. The persistence of the QWERTY keyboard illustrates this. Its layout was devised for early mechanical typewriters, reportedly to slow typists down and prevent jams, yet it remains the prevailing standard even though alternative layouts are claimed to offer significant efficiency gains. The world had already invested in training a secretarial workforce; the path was set.

If we do not establish common communication protocols for AI agents now, doing for them what TCP/IP and HTML did for the internet, we risk creating "fragmented islands" of AI that cannot interact: a digital version of the 120V/230V split in mains electricity. Laurie Locascio, head of the US National Institute of Standards and Technology (NIST), refers to standards as "the things you don’t think about... But oh, my God, you’re so glad they’re there." She recalls a Boeing official describing an airplane as "thousands of standards taking flight."

Given that artificial intelligence will impact domains ranging from scientific research to medical diagnostics, the demand for comprehensive standards is substantial. The following areas are particularly critical:

- Content Provenance: Establishing mechanisms to verify the origin and history of online information.

- Cybersecurity: Implementing security standards for AI-driven networks, analogous to SSL/TLS for web traffic, to safeguard against data breaches.

- Technical Protocols: Facilitating secure and reliable communication among diverse AI agents.

The complexity of standardisation can lead to inaction among policymakers, who may become overwhelmed by technical jargon or discouraged by the magnitude of the stakes involved. Narrative approaches can counteract this paralysis by demonstrating that technical decisions are fundamentally moral choices concerning collective safety.

The standardisation process requires modernisation

A significant tension exists between traditional bureaucratic processes and the rapid pace of AI development. The International Organization for Standardization (ISO) notes that creating a single standard typically takes three years. In frontier AI, three years is an eternity, spanning several generations of models. To avoid lagging behind, the standardisation process must accelerate; persisting with lengthy development cycles risks perpetually codifying outdated technologies.

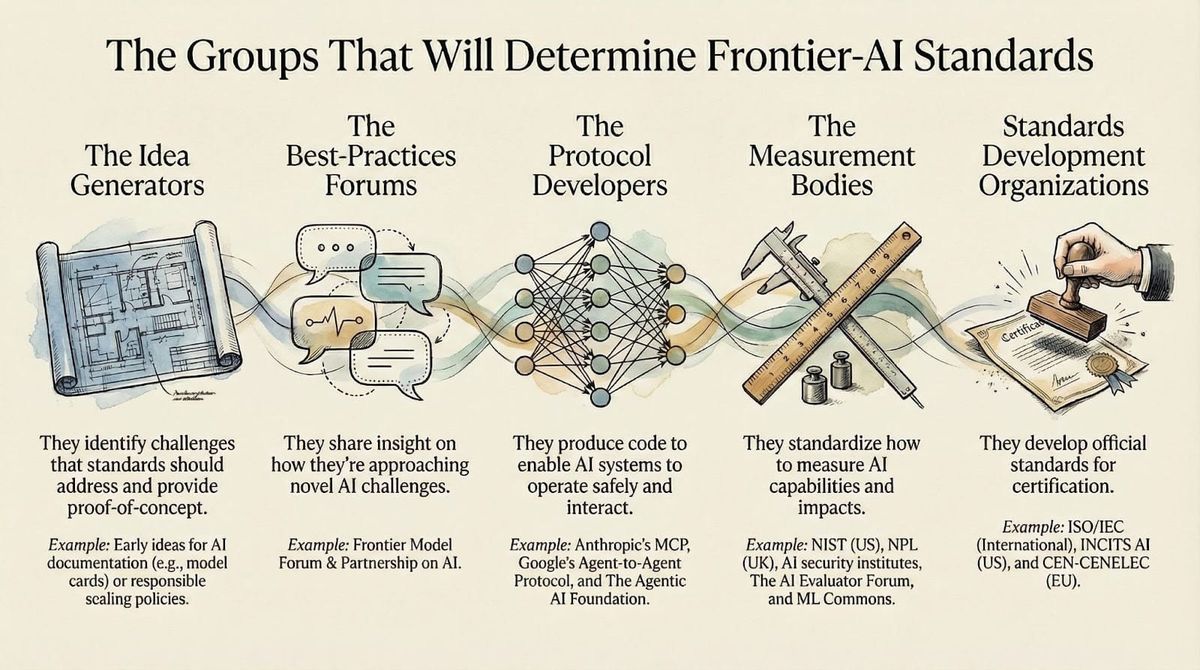

While perfect foresight is impossible, we can still cultivate "wise" standards that provide stability amid technological upheaval. We must look to the "Protocol Developers" and to "Measurement Bodies" such as NIST and ISO/IEC to plant the seeds.

Beware the "Everything Bagel" Standard

When faced with a complex technology, there is a temptation to issue broad, aspirational mandates that try to address every concern at once. A directive such as "make AI fair" is so vague that it offers no actionable guidance, hinders innovation, and is ultimately ignored.

Precision is the antidote. We must favour modular, specific standards that target distinct concerns, such as "preventing leaks of confidential data" or "LLM interoperability".

Modularity also makes it easier to correct past errors. In 1886, railroad companies in the southern United States made a coordinated effort to escape longstanding path dependence, converting some 13,000 miles of track to a standard gauge within two days and enabling seamless national rail travel. Designing AI protocols as modular components that can be updated independently means that suboptimal standards can be revised without systemic overhauls.

📊 Infographics of the Week

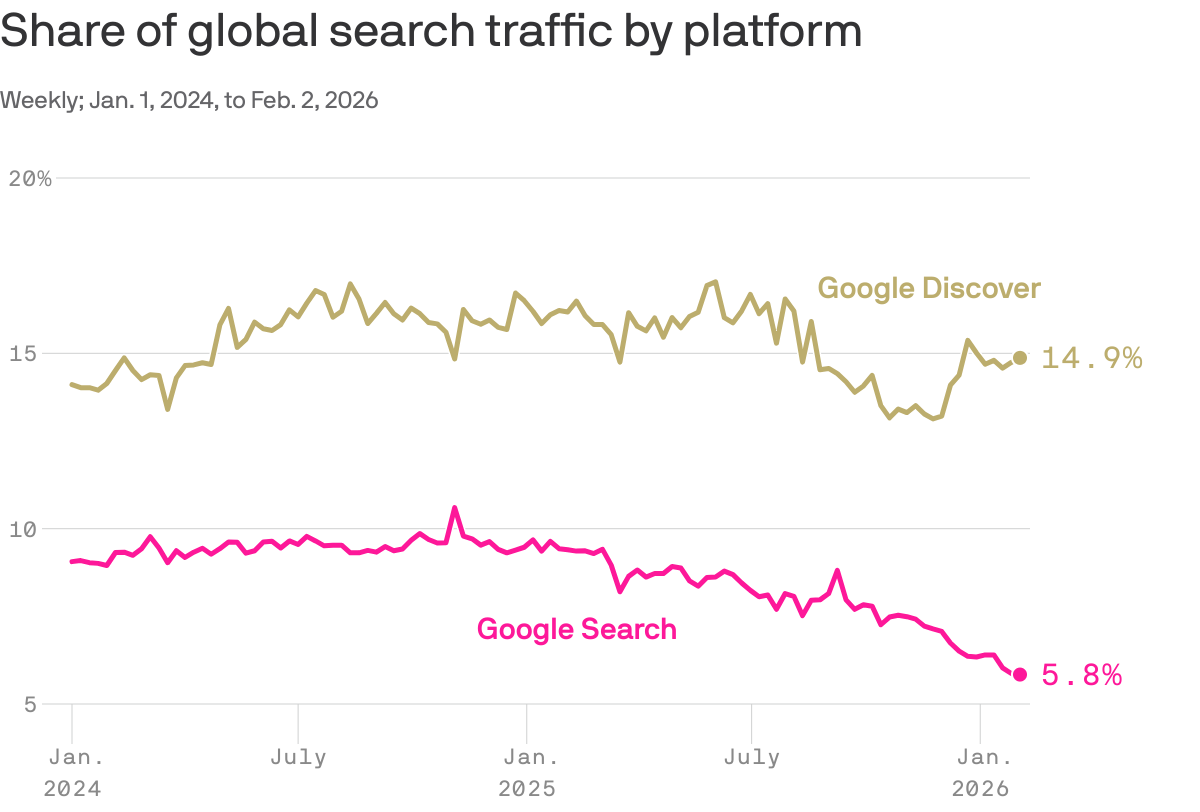

Chartbeat data show that news and media websites receive the most page views from AI platforms overall, but the lowest engagement per article. This suggests that readers rely on these sites for fact-checks or context within chatbots, but not for deeper analysis.